The trailer for the latest documentary I graded dropped yesterday. Check it out on Amazon Prime Video May 21st!

Space Jam: A New Legacy - Trailer 1

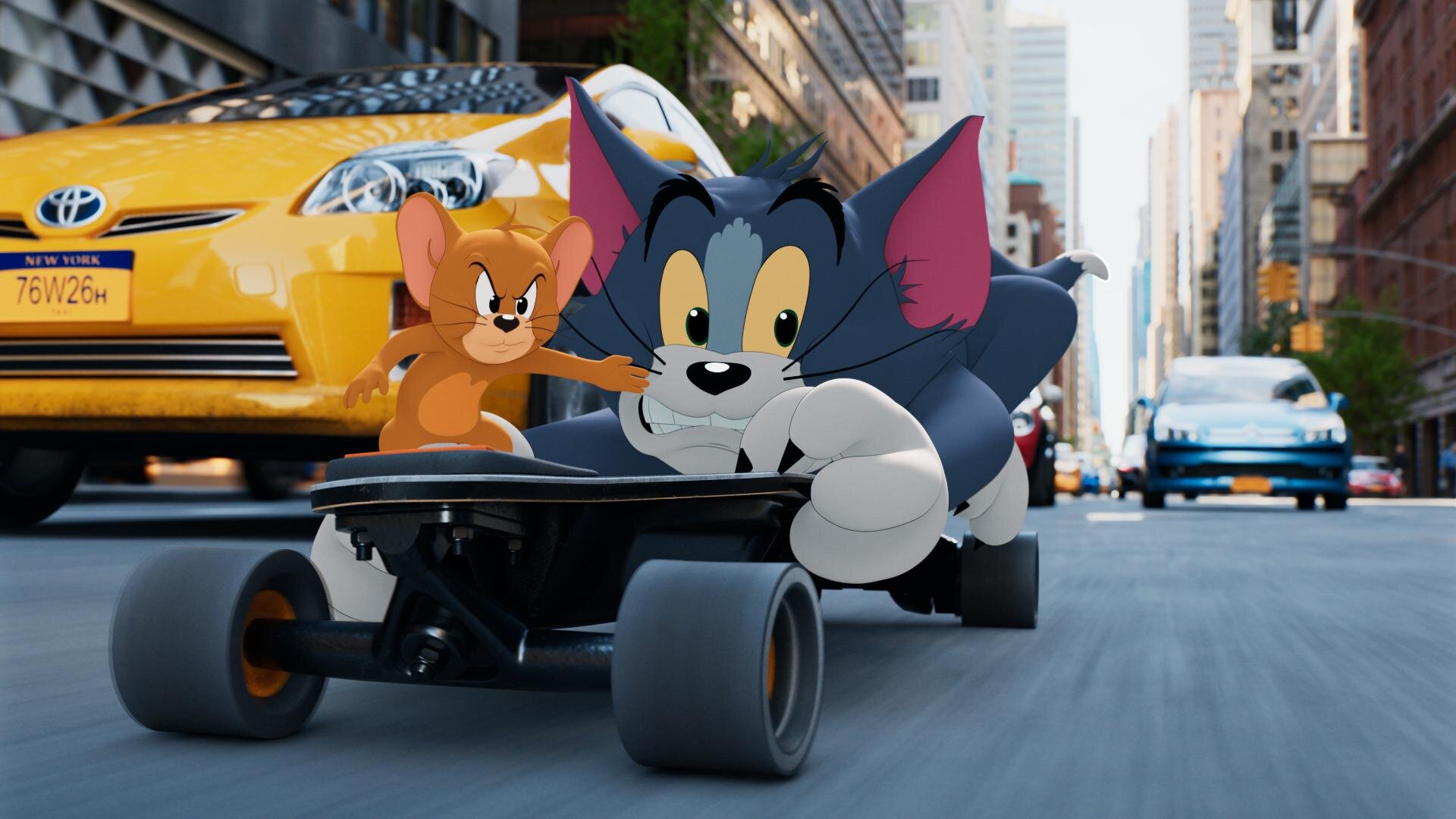

Tom and Jerry

Tom and Jerry: A global finish during a global pandemic.

“Tom and Jerry” is out today in theaters and on HBO Max! I’m excited to share how we built the color on two continents for these classic characters.

Communication

Arguably, the most crucial aspect of finishing this film is how Warner Bros Post Production Creative Services was able to support and complete this project during some of the most restrictive months of the pandemic. Key to the success of this was the communication between Burbank and London. Paul Lavoie (DI Manager), my long-time partner in crime, headed up the Burbank operations while Ann Lynch (Post Supervisor) and Alan Pritt (Post Production Manager DLL) kept everything moving along in London between editorial and VFX. All of this was over email, Teams, and phone calls since travel was prohibited. The challenge here was keeping everyone on the same page between the two different time zones. Later on, we found out that would also be one of the project’s greatest strengths.

VFX

Framestore London did the VFX shots for the show. There were numerous versions of every shot, right up until delivery, and a couple after, you know… the usual. Framestore received ACES AP0 EXRs and returned the same once work was complete, with one key difference. Aside from having beautiful animation, the returned plates also carried a number of matte channel layers in the EXR. Each character had mattes for their body, eyes, drybrush, and any props they were handling. This made for some extra prep prior to color, but it paid off big time when we started the grade.

Putting it Together

Conform and color prep was completed at De Lane Lea by Otto Rodd (DI Editor DLL). While we were sleeping in LA, Otto would cut in the night’s deliveries from VFX and connect all the mattes for use in the grade. With the number of characters and the granularity of the mattes, I can’t begin to tell you what a herculean effort this was. At the end of his day, he would send the project files for the reels that were updated back to LA, where Leo Ferrini (DI Editor) would then take over while Otto slept. Working in this way, we had a 24-hour work cycle with everyone working a normal day shift. There was a lot to do in those 24 hours. For starters, the organization that Otto and Leo imparted onto the timeline was second to none. Each character had its own layer. This layer stayed consistent throughout the whole film even if the character was not in the shot. Tom was on layer 100, Jerry on 200, Spike on 400, and so on. We didn’t necessarily use all the layers in between, but this kept the timeline nice and tidy. Simply put, we had a lot of layers with the number of characters and props in the film. In Pro Tools sound speak, we used all 128 tracks.

Plate Grade

Character Grade

Covid Grading

Things were looking pretty bad on the COVID front when we started the grade in early December. Numbers were spiking, most likely due to Thanksgiving gatherings, and the situation was looking pretty bleak. Tim Story (Director), Chris DeFaria (Producer), and Peter Elliot (Editor) rotated supervising the grade since I was limited to only 2 creatives in the room at a time. We sent Baselight project files to De Lane Lea so that Alan Stewart (Cinematographer) and Frazer Churchill (VFX Supervisor) could review them in London. Working on hybrid animation is sort of like grading two separate films. We had the initial grade for the live-action plate. The direction for the show was bright, vibrant, and colorful. Next, each character got their pass. The characters had very specific targets. We treated them as if this were a cel-animated cartoon. There was minimal interactive lighting on them. The idea was to keep the characters consistent throughout the whole film. To achieve this, I composited the VFX character back over my plate grade so that they were unaffected by the color of the live-action plate. Leo and Otto categorized each character's matte layer so that I could solo them out. In this way, it sort of works like a search in email. I could “search” for all “Tom” shots and then the Baselight would return a reel of only shots that Tom was in. Then I would make corrections based on the character’s environment to ensure that they were perceived the same, taking local color into account. This was a huge help in keeping the characters true to their looks throughout the film.

Theatrical Version

The color pipeline for the theatrical grade was ACES AP0 -> ACEScct (grading space) -> Show LMT -> P3D65. ACES 1.1 was used for the P3D65 ODT. We made a temp DCP once the final grade was approved to screen in a room large enough to have everyone together. The team chose to screen in the Ross theater on the WB lot, which was my first time back in a proper theater in ages. Now I know all theaters aren’t the Ross, but it did remind me how much I missed the theatrical experience. There really isn’t any substitute for best-in-class sound and picture exhibition. Hopefully, we can all be together at the cinema soon.

HDR Grading

HDR grading started immediately after the theatrical was locked. We had a tight turnaround since the picture would be available simultaneously in theaters and on HBO Max. The 1000nit p3D65 HDR grade was completed in three days. ACES helped in doing some of the heavy lifting regarding the basic tone mapping. I then came up with an additional HDR LMT to add a bit more contrast and rolled off the highlights softer than what the stock ACES curve was doing. The biggest challenge after that was correcting all the characters. Since the animated characters bypassed the show LMT, their values, especially in the eye whites, were very high for HDR. Again, being able to solo the characters was a huge help for this pass. Once completed, the filmmakers came in for trims and approval on two x300’s on opposite sides of the room to ensure proper social distancing. The Sony x300’s have a wide viewing angle, especially when compared to the newer x310s, but a color skew does appear when you sit too far off-axis. I wanted to make sure that everyone had a straight shot at the display, even though we were spread apart. Having two was a nice luxury and I’m sure went a long way to making everyone feel safe, comfortable, and confident in the viewing setup.

SDR Trim

Next up was taking ten pounds of HDR and fitting it into the 8 pound rec709 bag. The Dolby trim was done with the same dual x300’s. One set up for 1000nit PQ p3D65 and the other setup for 100nit 709. Dolby analysis was calculated for every shot and then a base Dolby trim was applied as a starting point. I used the HDR and Theatrical passes as a reference and trimmed the SDR to best match given the limitations of a smaller gamut. Really the only bump I had here was the sky. In the theatrical P3 version, we had a very strong cyan sky that was meticulously worked on with shapes and keys to get as vibrant of a sky as possible. Once we put those values into 709 they landed out of gamut.
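For the curious, here is a rough sketch of why a strong P3 cyan lands out of gamut in 709. It is not anything from our pipeline. The matrix is the commonly quoted linear-light P3-D65 to Rec.709 conversion, rounded, and the sky value is a made-up number for illustration.

```python
# Approximate linear-light P3-D65 -> Rec.709 matrix. Both spaces share a D65
# white point, so no chromatic adaptation is involved. Values are rounded.
P3D65_TO_REC709 = (
    (1.2249401, -0.2249402, 0.0000000),
    (-0.0420570, 1.0420571, 0.0000000),
    (-0.0196376, -0.0786361, 1.0982735),
)


def p3_to_rec709(rgb):
    """Convert one linear P3-D65 RGB triplet to linear Rec.709."""
    return tuple(sum(m * c for m, c in zip(row, rgb)) for row in P3D65_TO_REC709)


def out_of_gamut(rgb, tolerance=1e-6):
    """True if any channel falls outside the 0..1 range of the target space."""
    return any(c < -tolerance or c > 1.0 + tolerance for c in rgb)


# Hypothetical saturated cyan sky value in linear P3-D65.
sky_p3 = (0.0, 0.8, 1.0)
sky_709 = p3_to_rec709(sky_p3)

# Red goes negative and blue goes above 1.0, so something has to give.
print(sky_709, out_of_gamut(sky_709))
```

A negative red channel is the brick wall in the analogy that follows: the value has to be clipped or steered somewhere, and where it gets steered decides whether the sky reads cyan or magenta.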

Blues twisting towards magenta as they reach the boundary of the 709 gamut.

Imagine a car headed straight for a brick wall. At some point, the driver needs to turn either left or right. I guess the other option would be to crash into the wall, but in this analogy that would be clipping and we don’t want that. If the driver goes left, he heads towards the magenta side of blue. If he goes right, he goes towards the cyan side of blue. Dolby in this case took our cyans and turned left. This resulted in a more magenta sky, which didn’t look bad; it was just not as intended. To remedy this, I used the Dolby secondaries to swing the blue/cyan values away from magenta and back towards the original cyan. The trim took about a day and a half. Once completed, we had one more approval session with only one note!

Correcting the blue values to twist towards cyan as they reach the boundary of the 709 gamut.

Deliverables

Next, it was time to create the masters. The Theatrical had a p3D65 2.6 gamma DSM created, along with an XYZ 2.6 gamma DCDM. We also archived an ACES master for future remastering, plus an un-graded ACES archival master for preservation. The HDR version was rendered out as 1000nit p3D65 PQ tiffs and then placed into a rec2020 gamut by Julio Meganes (color assistant) in MPI’s Media Ops department. A Dolby XML file containing the metadata recipe to get to the 709 color trims was exported from the Baselight. Julio imported that into the Transkoder and married it to the HDR picture. From there, the final audio was synced up and many sub-masters in various formats were created for servicing and distribution.

Thanks for Reading!

Thanks for taking the time to read about how the sausage was made. It was a truly collaborative effort leveraging the strengths of Warner Bros Post Production Creative Services’ global footprint. I couldn’t be happier with the way the project turned out and am excited to hear what y’all think in the comments below. Now grab the family and some popcorn, and go watch Tom and Jerry destroy the Royal Gate Hotel!

Baselight Tips and Tricks

Hey everybody! Here is a video that Filmlight just released on their website. It’s a great series and I’m happy to have contributed my little bit. Let me know what you think.

The War With Grandpa

Hey everyone! Here is a project I graded prior to the shutdown. The picture is eyeing an October 9th release. Fingers crossed we are in a better place with COVID by then. Check out the link below.

Finishing Scoob!

“Scoob!” is out today. Check out my work on the latest Warner Animation title. Here are some highlights and examples of how we pushed the envelope of what is possible in color today.

Building the Look

ReelFX was the animation house tasked with bringing “Scoob!” from the boards to the screen. I had previously worked with them on “Rock Dog” so there was a bit of a shorthand already in place. I already had a working understanding of their pipeline and the capabilities of their team. When I came on-board, production was quite far along with the show look. Michael Kurinsky (Production Designer) had already been iterating through versions addressing lighting notes from Tony Cervone (Director) through a LUT that ReelFX had created. This was different from “Smallfoot” where I had been brought on during lighting and helped in the general look creation from a much earlier stage. The color pipeline for “Scoob!” was Linear Arri Wide Gamut EXR files -> Log C Wide Gamut working space -> Show LUT -> sRGB/709. Luckily for me, I would have recommended something very similar. One challenge was that the LUT was only a forward transform with no inverse and was only built for rec.709 primaries. We needed to recreate this look targeting P3 2.6 and ultimately rec.2020 PQ.

Transform Generation

Those of you that know me, know that I kind of hate LUTs. My preference is to use curves and functional math whenever possible. This is heavier on the GPUs but with today’s crop of ultra-fast processing cards, it hardly matters. So, my first step was to take ReelFX’s LUT and match the transform using curves. I went back and forth with Mike Fortner from ReelFX until we had an acceptable match.

My next task was to take our new functional forward transform and build an inverse. This is achieved by finding the delta from a 1.0 slope and multiplying that value by a -1. Inverse transforms are very necessary for today’s deliverable climate. For starters, you will often receive graphics, logos, and titles in display referred spaces such as P3 2.6 or rec.709. The inverse show LUT allows you to place these into your working space.
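Here is a minimal sketch of the general idea, not the actual show transform. It assumes the forward curve is strictly increasing, and it builds the inverse numerically by sampling the curve and swapping the axes, which mirrors it across the 1.0 slope line. The `look_curve` function is a made-up stand-in for a real show curve.

```python
from bisect import bisect_left


def look_curve(x):
    """Hypothetical forward curve: a gentle S-curve blended with identity."""
    smooth = x * x * (3.0 - 2.0 * x)
    return 0.5 * x + 0.5 * smooth


def interp(x, xs, ys):
    """Piecewise-linear interpolation; xs must be strictly increasing."""
    if x <= xs[0]:
        return ys[0]
    if x >= xs[-1]:
        return ys[-1]
    i = bisect_left(xs, x)
    t = (x - xs[i - 1]) / (xs[i] - xs[i - 1])
    return ys[i - 1] + t * (ys[i] - ys[i - 1])


def make_inverse(forward, samples=1025):
    """Build an inverse by sampling the forward curve and swapping axes."""
    xs = [i / (samples - 1) for i in range(samples)]
    ys = [forward(x) for x in xs]
    # Reading the table "sideways" is the mirror across the identity line.
    return lambda y: interp(y, ys, xs)


inverse_curve = make_inverse(look_curve)

# A display-referred graphic value goes through the inverse to land in the
# working space, then forward again on output, and should come back unchanged.
logo_value = 0.42
print(look_curve(inverse_curve(logo_value)))
```

The round trip is the whole point: a logo placed through the inverse and then pushed through the show look on output should match what the client delivered, within interpolation error.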

Curve and its inverse function

After the Inverse was built, I started to work on the additional color spaces I would be asked to deliver. This included the various forward transforms to p3 2.6 for theatrical, rec.2020 limited to P3 with a PQ curve for HDR, and rec.709 for web/marketing needs. I took all of these transforms and baked them into a family DRT. This is a feature in Baselight where the software will automatically use the correct transform based on your output. A lot of work up front, but a huge time saver on the back end; plus less margin for error since it is set programmatically.

Trailers First

The first pieces that I colored with the team were the trailers. This was great since it afforded us the opportunity to start developing workflows that we would use on the feature.

My friend in the creative marketing world once said to me “I always feel like the trailer is used as the test.” That’s probably because the trailer is the first picture that anybody will see. You need to make sure it’s right before it’s released to the world.

Conform

Conform is one aspect of the project where we blazed new paths. It’s common to have 50 to 60 versions of a shot as it gets ever more refined and polished through the process. This doesn’t just happen in animation. Live-action shows with lots of VFX (read: photo-real animation) go through this same process.

We worked with Filmlight to develop a workflow where the version tracking was automated. In the past, you would need an editor to be re-conforming or hand dropping in shots as new versions came in. On “Scoob!”, a database was queried and the correct shot if available was automatically placed in the timeline. Otherwise, if not available, the machine would use the latest version delivered to keep us grading until the final arrives. This saves a huge amount of time (read: money).
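The selection rule is simple enough to sketch. This is not Filmlight’s implementation or the production database; the shot names, version numbers, and the `pick_version` helper are all hypothetical, just to show the “requested version if we have it, otherwise the latest delivered” logic.

```python
def pick_version(requested, delivered):
    """Return the version to cut in for one shot.

    requested: the version number editorial's cut calls for.
    delivered: iterable of version numbers that have arrived on disk.
    """
    delivered = set(delivered)
    if not delivered:
        return None  # nothing delivered yet; leave the slot empty
    if requested in delivered:
        return requested  # the correct shot is available
    return max(delivered)  # keep grading on the latest until the final lands


# Hypothetical cut list and delivery log.
cut_list = {"sq100_sh010": 57, "sq100_sh020": 12, "sq100_sh030": 3}
deliveries = {
    "sq100_sh010": [55, 56, 57],
    "sq100_sh020": [9, 10],
    "sq100_sh030": [],
}

timeline = {shot: pick_version(v, deliveries[shot]) for shot, v in cut_list.items()}
print(timeline)
```

Run on every update, a rule like this means nobody has to hand drop shots just to keep the timeline current.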

Grading

Coloring for animation

I often hear, “It’s animation… doesn’t it come to you already correct?” Well, yes and no. What we do in the bay for animation shows is color enhancement; not color correction. Often, we are taking what was rendered and getting it that last mile to where the Director, Production Designer, and Art Director envisioned the image to be.

This includes windows and lighting tricks to direct the eye and enhance the story. Also, the use of secondaries to further stretch the distance between two complementary colors, effectively adding more color contrast. Speaking of contrast, it was very important to Tony that we were never too crunchy. He always wanted to see into the blacks.

These were the primary considerations when coloring “Scoob!” Take what is there and make it work the best it can to promote the story the director is telling. Which takes me to my next tool and technique that was used extensively.

Deep Pixels and Depth Mattes

I’ve always said, if you want to know what we will be doing in color five years from now, look at what VFX is doing today. Five years ago in VFX, deep pixels, or voxels as they are sometimes called, were all the rage. Today they are a standard part of any VFX or Animation pipeline. Often they are thrown away because color correctors either couldn’t use them or it was too cumbersome. Filmlight has recently developed tools that allow me to take color grading to a whole other dimension.

A standard pixel has 5 values: R, G, B, and X, Y. A voxel has 6 values: R, G, B, and X, Y, Z. Basically, for each pixel in a frame, there is another value that describes where it is in space. This allows me to “select” a slice of the image to change or enhance.

This matte also works with my other 2D qualifiers turning my circles and squares into spheres and cubes. This allows for corrections like “more contrast but only to the foreground” or desaturate the character behind Scooby, but in front of Velma.
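As a rough illustration, and not how Baselight does it internally, a depth slice can be thought of as one more qualifier that multiplies with a 2D shape. Every function name and number below is an assumption made for the sketch.

```python
def depth_weight(z, near, far, softness=0.0):
    """Matte weight (0..1) for a pixel at depth z against a near/far slab."""
    if near <= z <= far:
        return 1.0
    if softness <= 0.0:
        return 0.0
    distance = (near - z) if z < near else (z - far)
    return max(0.0, 1.0 - distance / softness)  # linear falloff outside the slab


def circle_weight(x, y, cx, cy, radius):
    """Hard-edged 2D circular window."""
    return 1.0 if (x - cx) ** 2 + (y - cy) ** 2 <= radius ** 2 else 0.0


def sphere_like_matte(x, y, z, cx, cy, radius, near, far, softness=0.0):
    """A 2D circle limited in depth behaves like a volume in the scene."""
    return circle_weight(x, y, cx, cy, radius) * depth_weight(z, near, far, softness)


# Hypothetical pixels: same screen position, different depths.
foreground_character = (50, 40, 2.0)
background_wall = (50, 40, 12.0)

for x, y, z in (foreground_character, background_wall):
    print(z, sphere_like_matte(x, y, z, cx=50, cy=40, radius=10, near=1.0, far=3.0))
```

Both pixels sit inside the same circle on screen, but only the one inside the depth slab gets the correction. That is the “behind Scooby, but in front of Velma” kind of selection.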

Using the depth mattes along with my other traditional qualifiers all but eliminated the need for standard alpha style mattes. This not only saves a ton of time in color since I’m only dealing with one matte but also generates savings in other departments. For example with fewer mattes, your EXR file size is substantially smaller, saving on data management costs. Additionally, on the vendor side, ReelFX only had to render one additional pass for color instead of a matte per character. Again, a huge saving of resources.

I’m super proud of what we were able to accomplish on “Scoob!” using this technique and I can’t wait to see what comes next as this becomes standard for VFX deliveries. A big thank you to ReelFX for being so accommodating to my mad scientist requests.

Corona Time

Luckily, we were done with the theatrical grade before the pandemic hit. Unfortunately, we were far from finished. We were still owed the last stragglers from ReelFX and had yet to start the HDR grade.

Remote Work

We proceeded to set up a series of remote options. First, we set up a calibrated display at Kurinsky’s house. Next, I upgraded my connection to my home color system to allow for faster upload speeds. A streaming session would have been best but we felt that would put too many folks in close contact since it does take a bit of setup. Instead, I rendered out high-quality ProRes XQ files. Kurinsky would then give notes on the reels over Zoom or email. I would make changes, rinse and repeat. For HDR, Kurinsky and I worked off a pair of x300s. One monitor was set for 1000nit rec.2020 PQ and the other for the 100nit 709 Dolby trim pass. I also made H.265 files that would play off a thumb drive once plugged into an LG E-series OLED. Finally, Tony approved the 1.78 pan and scan in the same way.

I’m very impressed with how the whole team managed to not only complete this film but finish it to the highest standards under incredibly trying times. An extra big thank you to my right-hand man Leo Ferrini who was nothing but exceptional during this whole project. Also, my partner in crime, Paul Lavoie, whom I have worked with for over 20 years. Even though he was at home, it felt like he was right there with me. Another big thanks.

Check Out the Work

Check out the movie at the link below and tell me what you think.

Thanks for reading!

-John Daro

How To - AAF and EDL Export

AAF and EDL Exporting for Colorists

Here is a quick how-to on exporting AAFs and EDLs from an Avid bin. Disclaimer - This is for colorists, not editors!

Exporting an AAF:

First, open your project. Be sure to set the frame rate correctly if you are starting a new project or importing a bin from another.

Next, open the bin that contains the sequence you want to export an AAF from.

Select the timeline and right click it. This sequence should already be cleaned for color. Meaning, camera source on v1, opticals on v2, speed fx on v3, vfx on v4, titles and graphics on v5.

After you right click, go “Output” -> “Export to File”

Navigate to the path that you want to export to. Then, click “Options.”

In the “Export As” pulldown select “AAF.” Next, un-check “Include Audio Tracks in Sequence” and make sure “Export Method:” is set to “Link to (Don’t Export) Media.” Then click “Save” to save the settings and return to the file browser.

Give the AAF a name and hit “Save.” That’s it! Your AAF is sitting on the disk now.

Exporting an EDL:

We should all be using AAFs to make our conform lives easier, but if you need an EDL for a particular piece of software or just want something that is easily read by a human, here you go.

Set up the project and import your bin the same as for an AAF. Instead of right-clicking on the sequence, go -> “Tools” -> “List Tool” and it will open a new window. I’m probably dating myself, but back in my day, this was called “EDL Manager.” List Tool is a huge improvement since it lets you export multi-track EDLs quickly.

Select “File_129” from the “Output Format:” pull-down. This sets the tape name to 129 characters (128+0 =129) which is the limit for a filename in most operating systems. Next, click the tracks you want to export.

Double-click your sequence in the bin to load your timeline into the record monitor. Then click “Load” in the “List Tool” window. At this point, you can click “Preview” to see your EDL in the “Master EDL” tab. To save, click the “Save List” pull-down and choose “To several files.” This option will make one EDL per video track. Choose your file location in the browser and hit save. That’s it. Your EDLs are ready for conforming or notching.

Alternate Software EDL Export

That’s great John, but what if I’m using something else other than Avid?

Here are the methods for EDL exports in Filmlight’s Baselight, BMD DaVinci Resolve, Adobe Premiere Pro, and SGO Mistika in rapid-fire. If you are using anything else… please stop.

Baselight

Open “Shots” view (Win + H) and click the gear pull-down. Next click “Export EDL.” The exported EDL will respect any filters you may have in “Shots” view, which makes it a very powerful tool, but also something to keep an eye on.

Resolve

In the Media Pool, right-click your timeline and select “Timelines” -> “Export” -> “AAF/XML/EDL”

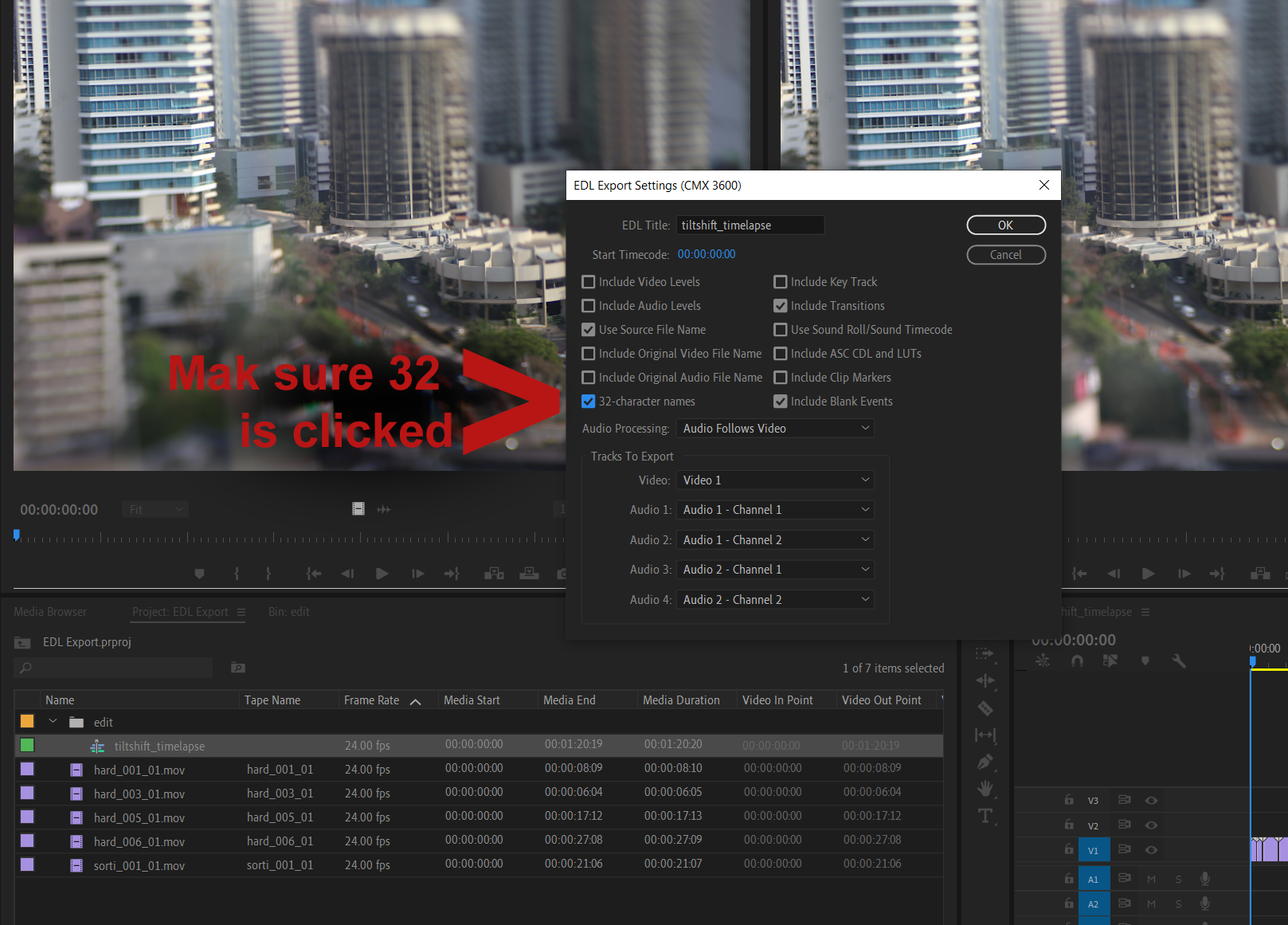

Premiere Pro

Make sure your “Tape Name” column is populated.

Make sure to have your Timeline selected. Then go, “File” -> “Export” -> “EDL”

The most important setting here is “32 character names.” Sometimes this is called “File32” in other software. Checking this ensures the file name in its entirety (as long as it’s not longer than 32 characters) will be placed into the tape ID location of the EDL.

Mistika

Set your marks in the Timespace where you want the EDL to begin and end. Then select “Media” -> “Output” -> “Export EDL2” -> “Export EDL.” Once pressed you will see a preview of the EDL on the right.

No matter what the software is, the same rules apply for exporting.

Clean your timeline of unused tracks and clips.

Ensure that your program has leader and starts at an hour or ##:59:52:00

Camera source on v1, Opticals on v2, Speed FX on v3, VFX on v4, Titles and Graphics on v5
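If you want a quick sanity check before sending an EDL along, something like the sketch below can catch the obvious problems. It is a rough checker under a few assumptions: the EDL is CMX3600-style with the four timecodes at the end of each event line, the first record-in should sit on the hour or at ##:59:52:00 as described above, and reel names should fit in 32 characters. The sample EDL text and the helper names are made up for illustration.

```python
import re

TIMECODE = re.compile(r"^\d{2}:\d{2}:\d{2}[:;]\d{2}$")


def parse_events(edl_text):
    """Pull event lines out of a CMX3600-style EDL, skipping comments/headers."""
    events = []
    for line in edl_text.splitlines():
        parts = line.split()
        if len(parts) < 8 or not parts[0].isdigit():
            continue
        if not all(TIMECODE.match(p) for p in parts[-4:]):
            continue
        events.append({"event": parts[0], "reel": parts[1], "rec_in": parts[-2]})
    return events


def check_edl(edl_text, max_reel_length=32):
    """Return a list of human-readable problems; an empty list means it looks OK."""
    events = parse_events(edl_text)
    if not events:
        return ["no events found"]
    problems = []
    # Zero-padded timecodes sort correctly as plain strings.
    first_rec_in = min(e["rec_in"] for e in events)
    if first_rec_in[3:].replace(";", ":") not in ("00:00:00", "59:52:00"):
        problems.append(f"program starts at {first_rec_in}")
    for e in events:
        if len(e["reel"]) > max_reel_length:
            problems.append(f"event {e['event']}: reel name longer than {max_reel_length}")
    return problems


SAMPLE_EDL = """TITLE: REEL_1_V1
FCM: NON-DROP FRAME
001  A001C003_200101_R1AB V     C        10:22:14:05 10:22:18:05 00:59:52:00 00:59:56:00
002  A004C011_200102_R1AB V     C        14:03:10:00 14:03:12:12 00:59:56:00 00:59:58:12
"""

print(check_edl(SAMPLE_EDL))
```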

Many of us are running lean right now. I hope this helps the folks out there who are working remotely without support and the colorists who don’t fancy editorial or perhaps haven’t touched those tools in a while.

Happy Grading!

JD

Brockmire Season 4 Starts Today

Supervised from pre-production through delivery. A total binge-worthy show. Perfect for these crazy times. A great example of collaboration between Atlanta and Burbank facilities.

HDR - Flavors and Best Practices

Better Pixels.

Over the last decade we have had a bit of a renaissance in imaging display technology. The jump from SD to HD was a huge bump in image quality. HD to 4K was another noticeable step in making better pictures, but had less of an impact than the previous SD to HD jump. Now we are starting to see 8K displays and workflows. Although this is great for very large screens, this jump has diminishing returns for smaller viewing environments. In my opinion, we are at the point where we do not need more pixels, but better ones. HDR or High Dynamic Range images along with wider color gamuts are allowing us to deliver that next major increase in image quality. HDR delivers better pixels!

Stop… What is dynamic range?

When we talk about the dynamic range of a particular capture system, what we are referring to is the delta between the blackest shadow and the brightest highlight captured. This is measured in stops, typically with a light meter. A stop is a doubling or a halving of light. This power-of-2 way of measuring light correlates well with our eyes’ logarithmic nature. Your eyeballs never “clip” and a perfect HDR system shouldn’t either. The brighter we go, the harder it becomes to see differences, but we never hit a limit.

Unfortunately, digital camera sensors do not work in the same way as our eyeballs. Digital sensors have a linear response, a gamma of 1.0, and do clip. Most high-end cameras convert this linear signal to a logarithmic one for post manipulation.

I was never a huge calculus buff but this one thought experiment has served me well over the years.

Say you are at one side of the room. How many steps will it take to get to the wall if each step you take is half the distance of your last? This is the idea behind logarithmic curves.

It will take an infinite number of steps to reach the wall, since we can always halve the half.

Someday we will be able to account for every photon in a scene, but until that sensor is made we need to work within the confines of the range that can be captured.

For example if the darkest part of a sampled image are the shadows and the brightest part is 8 stops brighter, that means we have a range of 8 stops for that image. The way we expose a sensor or a piece of celluloid changes based on a combination of factors. This includes aperture, exposure time and the general sensitivity of the imaging system. Depending on how you set these variables you can move the total range up or down in the scene.

Let’s say you had a scene range of 16 stops. This goes from the darkest shadow to direct hot sun. Our imaging device in this example can only handle 8 of the 16 present stops. We can shift the exposure to be weighted towards the shadows, the highlights, or the Goldilocks sweet spot in the middle. There is no right or wrong way to set this range. It just needs to yield the picture that helps to promote the story you are trying to tell in the shot. A 16-bit EXR file can handle roughly 30 stops of range, and more if you count the reduced-precision values near black. That is much more than any capture system can deliver currently.
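Since a stop is just a doubling, the arithmetic is a base-2 log of the ratio between the brightest and darkest values. Here is a small sketch. The scene numbers are invented, and the half-float limits used are the largest finite value (65504) and the smallest normalized value (2^-14) of a 16-bit float.

```python
import math


def stops_between(brightest, darkest):
    """Dynamic range in stops: how many doublings separate two linear values."""
    return math.log2(brightest / darkest)


# An 8-stop range is a 256:1 ratio of linear light.
print(stops_between(256.0, 1.0))

# Normalized range of a 16-bit half float as used in EXR files. Denormal
# values extend the bottom end further, with reduced precision.
HALF_MAX = 65504.0
HALF_MIN_NORMAL = 2.0 ** -14
print(stops_between(HALF_MAX, HALF_MIN_NORMAL))


def exposure_window(scene_darkest, sensor_stops, shift_stops=0.0):
    """Linear min/max a sensor would hold for a given exposure shift.

    shift_stops moves the whole window up (positive) or down (negative).
    """
    low = scene_darkest * 2.0 ** shift_stops
    return low, low * 2.0 ** sensor_stops


# Hypothetical 16-stop scene, 8-stop sensor: weighted to shadows vs highlights.
print(exposure_window(1.0, 8, shift_stops=0.0))
print(exposure_window(1.0, 8, shift_stops=8.0))
```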

Latitude is how far you can recover a picture from over or under exposure. Often latitude is conflated with dynamic range. In rare cases they are the same, but more often than not your latitude is less than the available dynamic range.

Film, the original HDR system.

Film from its creation always captured more information than could be printed. Contemporary stocks have a dynamic range of 12 stops. When you print that film you have to pick the best 8 stops to show via printing with more or less light. The extra dynamic range was there in the negative but was limited by the display technology.

Flash forward to our digital cameras today. Cameras from Arri, Red, Blackmagic, and Sony all boast dynamic ranges over 13 stops. The challenge has always been the display environment. This is why we need to start thinking of cameras not as the image creators but more as the photon collectors for the scene at the time of capture. The image is then “mapped” to your display creatively.

Scene referred grading.

The problem has always been how do we fit 10 pounds of chicken into an 8 pound bag? In the past when working with these HDR camera negatives we were limited to the range of the display technology being used. The monitors and projectors before their HDR counterparts couldn’t “display” everything that was captured on set even though we had more information to show. We would color the image to look good on the device for which we were mastering. “Display Referred Grading,” as this is called, limits your range and bakes in the gamma of the display you are coloring on. This was fine when the only two mediums were SDR TV and theatrical digital projection. The difference between 2.4 video gamma and 2.6 theatrical gamma was small enough that you could make a master meant for one look good on the other with some simple gamma math. Today the deliverables and masters are numerous with many different display gammas required. So before we even start talking about HDR, our grading space needs to be “Scene Referred.” What this means is that once we have captured the data on set, we pass it through the rest of the pipeline non-destructively, maintaining the relationship to the original scene lighting conditions. “No pixels were harmed in the making of this major motion picture.” is a personal mantra of mine.
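The “simple gamma math” between a 2.4 and a 2.6 master can be sketched as a pure power-law re-encode. This is an idealized illustration only: it assumes both displays follow a pure power curve and ignores differences in white point, peak luminance, and viewing surround.

```python
def regamma(code_value, source_gamma, target_gamma):
    """Re-encode a 0..1 display code value from one power-law gamma to another."""
    light = code_value ** source_gamma       # decode to relative display light
    return light ** (1.0 / target_gamma)     # re-encode for the target display


# A mid-grey code value mastered for 2.6 theatrical, re-encoded for 2.4 video.
theatrical_value = 0.5
video_value = regamma(theatrical_value, source_gamma=2.6, target_gamma=2.4)
print(video_value)

# Going back the other way recovers the original value.
print(regamma(video_value, source_gamma=2.4, target_gamma=2.6))
```

That trick only holds up when there are a couple of display-referred targets. With the number of masters required today, it is exactly what a scene-referred pipeline lets you avoid.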

I’ll add the tone curve later.

There are many different ways of working scene-referred. The VFX industry has been working this way for decades. The key point is we need to have a processing space that is large enough to handle the camera data without hitting the boundaries, i.e. clipping or crushing in any of the channels. This “bucket” also has to have enough samples (bit-depth) to be able to withstand aggressive transforms. 10 bits are not enough for HDR grading. We need to be working in full 16-bit floating point.

This is a bit of an exaggeration, but it illustrates the point. Many believe that a 10-bit signal is sufficient for HDR. I think for color work 16-bit is necessary. This ensures we have enough steps to adequately describe the meat and potatoes part of the image in addition to the extra highlight data at the top half of the code values.

Bit-depth is like butter on bread. Not enough and you get gaps in your tonal gradients. We want a nice smooth spread on our waveforms.

Now that we have our non-destructive working space, we use transforms or LUTs to map to our displays for mastering. ACES is a good starting point for a working space and a set of standardized transforms, since it works scene referred and is always non-destructive if implemented properly. The gist of this workflow is that the sensor linearity of the original camera data has been retained. We are simply adding our display curve for our various masters.

Stops measure scenes, Nits measure displays.

For measuring light on set we use stops. For displays we use a measurement unit called a nit. Nits are a measure of peak brightness, not dynamic range. A nit is equal to 1 cd/m². I’m not sure why there are two names for the same measurement, but for displays we use the nit. Perhaps candelas per meter squared was just too much of a mouthful. A typical SDR monitor has a brightness of 100 nits. A typical theatrical projector has a brightness of 48 nits. There is no set standard for what is considered HDR brightness. I consider anything over 600 nits HDR. 1000 nits, or 10 times brighter than legacy SDR displays, is what most HDR projects are mastered to. The Dolby Pulsar monitor is capable of displaying 4000 nits, which is the highest achievable today. The PQ signal accommodates values up to 10,000 nits.

The Sony x300 has a peak brightness of 1000 nits and is the current gold standard for reference monitors.

The Dolby Pulsar is capable of 4000 nit peak white

P-What?

Rec2020 color primaries with a D65 white point

The most common scale to store HDR data is the PQ Electro-Optical Transfer Function. PQ stands for perceptual quantizer. The PQ EOTF was standardized when SMPTE published the transfer function as SMPTE ST 2084. The color primaries most often associated with PQ are rec2020. BT.2100 is used when you pair the two, PQ transfer function with rec2020 primaries and a D65 white point. This is similar to how the definition of BT.1886 is rec709 primaries with an implicit 2.4 gamma and a D65 white point. It is possible to have a PQ file with different primaries than rec2020. The most common variance would be P3 primaries with a D65 white point. Ok, sorry for the nerdy jargon, but now we are all on the same page.
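For those who like to see the math, here is a small sketch of the PQ curve using the constants published in SMPTE ST 2084. The function names are my own, and the signal is treated as a normalized 0..1 value rather than integer code values.

```python
# SMPTE ST 2084 (PQ) constants.
M1 = 2610 / 16384
M2 = 2523 / 4096 * 128
C1 = 3424 / 4096
C2 = 2413 / 4096 * 32
C3 = 2392 / 4096 * 32
PEAK_NITS = 10000.0


def pq_encode(nits):
    """Inverse EOTF: absolute luminance in nits -> PQ signal in 0..1."""
    y = max(nits, 0.0) / PEAK_NITS
    yp = y ** M1
    return ((C1 + C2 * yp) / (1.0 + C3 * yp)) ** M2


def pq_decode(signal):
    """EOTF: PQ signal in 0..1 -> absolute luminance in nits."""
    ep = max(signal, 0.0) ** (1.0 / M2)
    numerator = max(ep - C1, 0.0)
    denominator = C2 - C3 * ep
    return PEAK_NITS * (numerator / denominator) ** (1.0 / M1)


# Where familiar brightness levels land on the PQ scale.
for nits in (48, 100, 1000, 4000, 10000):
    print(nits, round(pq_encode(nits), 4))
```

SDR reference white at 100 nits lands at roughly half of the signal range, and 1000 nits at roughly three quarters. Everything above that is headroom for brighter masters.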

HDR Flavors

There are four main HDR flavors in use currently. All of them use a logarithmic approach to retain the maximum amount of information in the highlights.

Dolby Vision

Dolby Vision is the most common flavor of HDR out in the field today. The system works in three parts. First you start with your master that has been graded using the PQ EOTF. Next you “analyse” the shots in your project to attach metadata about where the shadows, highlights, and meat and potatoes of your image are sitting. This is considered layer 1 metadata. Next this metadata is used to inform the Content Mapping Unit or CMU how best to “convert” your picture to SDR and lower nit formats. The colorist can “override” this auto conversion using a trim that is then stored in layer 2 metadata, commonly referred to as L2. The trims you can make include lift, gamma, gain, and sat. In version 4.0, out now, Dolby has given us the tools to also have secondary controls for six vector hue and sat. Once all of these settings have been programmed they are exported into an XML sidecar file that travels with the original master. Using this metadata, a Dolby Vision-equipped display can use the trim information to tailor the presentation to accommodate the max nits it is capable of displaying on a frame by frame basis.

HDR 10

HDR 10 is the simplest of the PQ flavors. The grade is done using the PQ EOTF. Then the entire show is analysed. The peak brightness and the highest frame-average brightness are calculated. These two metadata points are called MaxCLL (Maximum Content Light Level) and MaxFALL (Maximum Frame Average Light Level). Using these, a downstream display can adjust the overall brightness of the program to accommodate the display’s max brightness.
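To make those two numbers concrete, here is a simplified Python sketch of the analysis. It assumes each frame has already been converted to a flat list of per-pixel light levels in nits, which is my simplification; a real analysis tool works on the decoded RGB image, and the function name and sample frames are made up for illustration.

```python
def content_light_levels(frames):
    """Return (MaxCLL, MaxFALL) for a program.

    frames: list of frames, each a non-empty list of pixel light levels in nits.
    MaxCLL is the brightest single pixel anywhere in the program.
    MaxFALL is the highest per-frame average anywhere in the program.
    """
    frame_peaks = [max(frame) for frame in frames]
    frame_averages = [sum(frame) / len(frame) for frame in frames]
    return max(frame_peaks), max(frame_averages)


# Three tiny hypothetical "frames" of four pixels each.
frames = [
    [0.5, 40.0, 120.0, 80.0],       # dim interior
    [1.0, 300.0, 950.0, 20.0],      # one hot specular highlight
    [200.0, 400.0, 600.0, 300.0],   # bright exterior, no extreme peak
]

max_cll, max_fall = content_light_levels(frames)
print(f"MaxCLL:  {max_cll} nits")   # 950.0, from the second frame
print(f"MaxFALL: {max_fall} nits")  # 375.0, from the third frame
```

Notice that the two values come from different frames. One small highlight sets MaxCLL, while an evenly bright frame sets MaxFALL.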

HDR 10+

HDR 10+ is similar to Dolby Vision in that you analyse your shots and can set a trim that travels in metadata per shot. The difference is you do not have any color controls. You can adjust points on a curve for a better tone map. These trims are exported as an XML file from your color corrector.

HLG

Hybrid log gamma is a logarithmic extension of the standard gamma curve of legacy displays. The lower half of the code values use a conventional gamma-style curve (a square-root segment in the BT.2100 definition) and the top half use a log curve. Combining the legacy gamma with a log curve for the HDR highlights is what makes this a hybrid system. This version of HDR is backwards compatible with existing displays and terrestrial broadcast distribution. There is no dynamic quantification of the signal. The display just shows as much of the signal as it can.
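Here is a small Python sketch of the hybrid curve, using the HLG OETF as published in BT.2100. In that definition the lower segment is a square-root curve rather than a literal 2.4 gamma, and the two segments meet at a signal value of 0.5. The function name is mine, and the input is assumed to be normalized scene-linear light from 0 to 1.

```python
import math

# HLG OETF constants from ITU-R BT.2100.
A = 0.17883277
B = 0.28466892  # equals 1 - 4 * A
C = 0.55991073  # equals 0.5 - A * ln(4 * A)


def hlg_oetf(scene_linear):
    """Map normalized scene-linear light (0..1) to an HLG signal (0..1)."""
    if scene_linear <= 1 / 12:
        # Lower segment: square-root "gamma" part, fills signal 0 to 0.5.
        return math.sqrt(3 * scene_linear)
    # Upper segment: log part, fills signal 0.5 to 1.0.
    return A * math.log(12 * scene_linear - B) + C


if __name__ == "__main__":
    for e in (0.0, 0.01, 1 / 12, 0.25, 0.5, 1.0):
        print(f"scene linear {e:.4f} -> HLG signal {hlg_oetf(e):.4f}")
```

The bottom half of the signal range covers only the first twelfth of the scene light, and the log segment packs everything above that into the top half.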

Deliverables

Deliverables change from studio to studio. I will list the most common ones here that are on virtually every delivery instruction document. Depending on the studio, the names of these deliverables will change but the utility of them stays the same.

PQ 16-bit Tiffs

This is the primary HDR deliverable and derives some of the other masters on the list. These files typically have a D65 white point and are either Rec2020 or P3 limited inside of a Rec2020 container.

GAM

The Graded Archival Master has all of the color work baked in but does not have any output transforms. This master can come in three flavors, all of which are scene referred:

ACES AP0 - Linear gamma 1.0 with ACES primaries, sometimes called ACES prime.

Camera Log - The original camera log encoding with the camera’s native primaries. For example, for Alexa, this would be LogC Arri Wide Gamut.

Camera Linear - This flavor has the camera’s original primaries with a linear gamma 1.0.

NAM

The non-graded assembly master is the equivalent of the VAM back in the day. It is just the edit with no color correction. This master needs to be delivered in the same flavor that your GAM was.

ProRes XQ

This is the highest quality ProRes. It can hold 12-bits per image channel and was built with HDR in mind.

Dolby XML

This XML file contains all of the analysis and trim decisions. For QC purposes, it needs to be able to pass a check from Dolby’s own QC tool, Metafier.

IMF

Interoperable Master Format files can do a lot. For the scope of this article we are only going to touch on the HDR delivery side. The IMF is created from an MXF made from JPEG 2000s. The jp2k files typically come from the PQ tiff master. It is at this point that the XML file is married with picture to create one nice package for distribution.

Near Future

Currently we master for theatrical first for features. In the near future I see the “flippening” occurring. I would much rather spend the bulk of the grading time on the highest quality master rather than the 48-nit limited range theatrical pass. I feel like you get a better SDR version by starting with the HDR since you have already corrected any contamination that might have been in the extreme shadows or highlights. Then you spend a few days “trimming” the theatrical SDR for the theaters. The DCP standard is in desperate need of a refresh. 250 Mbps is not enough for HDR or high resolution masters. For the first time in film history you can get a better picture in your living room than in most cinemas. This of course is changing and changing fast.

Sony and Samsung both have HDR cinema solutions that are poised to revolutionize the way we watch movies. Samsung has their 34-foot Onyx system, which is capable of 400-nit theatrical exhibition. You can see a proof of concept model in action today if you live in the LA area. Check it out at the Pacific Theatres Winnetka in Chatsworth.

Sony has, in my opinion, the winning solution at the moment. They have their CLED wall, which is capable of delivering 800 nits in a theatrical setting. These types of displays open up possibilities for filmmakers to use a whole new type of cinematic language without sacrificing any of the legacy storytelling devices we have used in the past.

For example, this would be the first time in the history of film where you could effect a physiological change in the viewer. I have often thought about a shot I graded for “The Boxtrolls” where the main character, Eggs, comes out from a whole life spent in the sewers. I cheated an effect where the viewer’s eyes were adjusting to an overly bright world. To achieve this I cranked the brightness and blurred the image slightly. I faded this adjustment off over many shots until your eye “adjusted” back to normal. The theatrical grade was done at 48 nits. At this light level, even at its brightest, the human eye is not iris’ed down at all, but what if I had more range at my disposal? Today I would crank that shot until it made the audience’s irises close down. Then over the next few shots the audience would adjust back to the “new” brighter scene and it would appear normal. That initial shock would be similar to the real world shock of coming from a dark environment to a bright one.

Another such example that I would like to revisit is the myth of “L’Arrivée d’un train en gare de La Ciotat.” In this early Lumière picture a train pulls into a station. The urban legend is that this film had audiences jumping out of their seats and ducking for cover as the train came hurtling towards them. Imagine if we set up the same shot today but in a dark tunnel. We could make the headlight so bright in HDR that, coupled with the sound of a rushing train, it would cause most viewers, at the very least, to look away as it rushes past. A 1000 nit peak after your eyes have been acclimated to the dark can appear shockingly bright.

I’m excited for these and other examples yet to be created by filmmakers exploring this new medium. Here’s to better pixels and constantly progressing the art and science of moving images!

Please leave a comment below if there are points you disagree with or have any differing views on the topics discussed here.

Thanks for reading,